WE SEE IDEAS & TRANSFORM THEM

PREMIER WEB DEVELOPMENT AGENCY

Lounge Lizard is a top web development company specializing in custom-built, technology-forward websites that are fast, responsive, and built to get results. With our unique mix of coding specialists, we are able to blend creativity and originality with robust, task-oriented APIs designed to optimize your users’ journey. During the development of a website, our team draws on its vast experience in HTML, CSS, and a wide variety of advanced programming languages to bring your website to life.

Solutions for Every Type of Business

No two businesses are the same. That’s why we have provided website development solutions to over 600 organizations, from e-commerce, B2B, and B2C companies to non-profits, meeting each company’s specific needs. When it comes to custom website development services, Lounge Lizard’s dev team leads the industry.

What You Get

Over 80% of potential customers find products and services online, whether through search engine results, online ads, social media, or directories. As your website development company, we do everything in our power to provide fully working, rigorously tested sites that surpass the needs of our clients while increasing their clientele.

Website Development Services

For full-stack solutions, our tech wizards are fluent in JavaScript, PHP, and Python, and have an excellent grasp of the latest HTML and CSS standards.

Responsive Website Development

By optimizing processes like image compression and cache configuration, our specialists can increase your site’s performance by 15 to 50%.

E-commerce Website Development

Your website should serve as more than just an electronic brochure. It should motivate customers to take action and give them the confidence to buy.

Custom WordPress Development

Our team will customize your WordPress site to be unique and tech-savvy, while delivering a site that can be maintained without extensive coding knowledge.

Shopify, Laravel, Magento

Using Shopify, Magento, Laravel, or others, our dev team ensures your customers have a great user experience that inspires repeat visits and drives sales.

App Development

Our engineers design and develop best-in-class web apps that are user-friendly and intuitive, and that capture and implement our clients’ goals for new and legacy app solutions.

Advantages of a Web Development Agency by the Numbers

Here are just some of the results we have realized as an internet advertising agency with a potent mix of expertise, creativity, and online marketing knowledge, plus a commitment to understanding each client’s business and competitive advantages.

Why You Should Choose Lounge Lizard for Web Development

Full-Service Website Development

As a professional website development company, we translate your vision and user journey into a fully realized digital entity.

Custom 3D Configurations

We engineer powerful 3D configurations that deliver product personalization, boosting customer engagement and closing sales.

Responsive Mobile

Using the latest in AMP technology, we create webpages that load faster and perform better on handheld devices, PCs, and laptops.

Terrific Value

For the website development price, your business receives outstanding value on your new site as it’s created, tested, and deployed.

Website Maintenance

Our website maintenance plans include everything your website requires, from minor content updates to extensive design upgrades.

Hosting Services

When you host your website with Lounge Lizard, it enjoys security and ongoing maintenance at a very cost-effective rate.

Lounge Lizard Awards

About Website Development Services

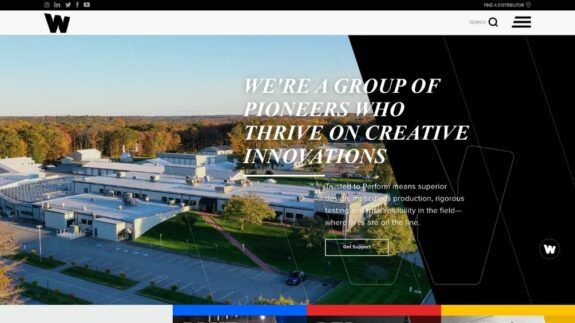

FEATURED WORK

Whelen

HIVE

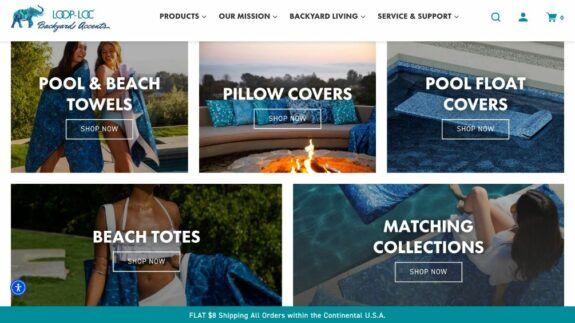

LOOP-LOC Backyard Accents

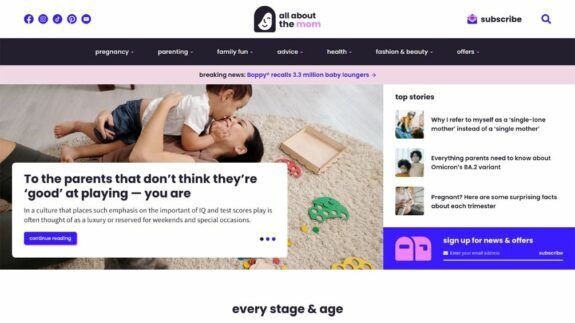

All About The Mom

Blue Owl

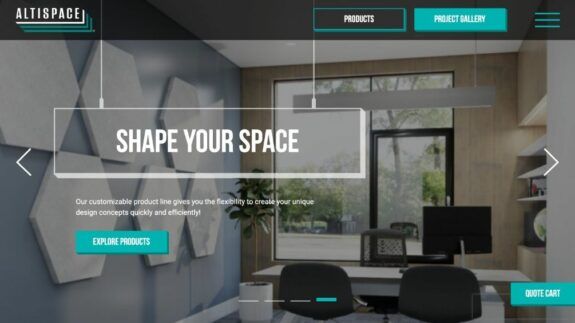

Altispace

Website Closers

Twisthink

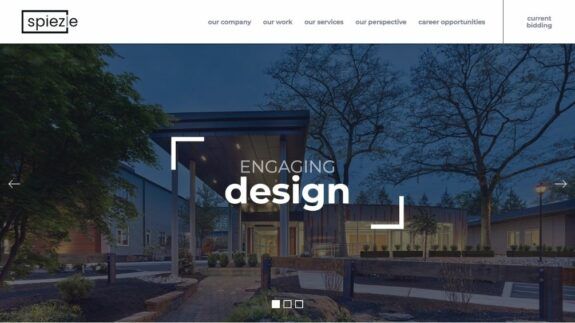

Spiezle Architectural Group

Physicians’ Reciprocal Insurers

Spectrum Retirement

Energy Infrastructure Partners

Our Clients

Web Development Process

1. Project Launch
2. Refreshing Brand
3. Wireframing & Prototyping
4. Design Creation
5. Content Creation
6. Site Development
7. Testing
8. Launch

What Clients are Saying About Us

Lounge Lizard is a professional, quick-learning, creative, responsive, and smart firm. They quickly understood the complexities of our business and applied their talent to addressing our website design and functional needs. We are quite pleased with their work, and we have twice renewed our annual maintenance and support agreement with them.

We have extensive experience in the following:

FAQs

Request a Web Design and Marketing Proposal.
